Enterprise AI that stays inside your walls

The private agent platform for building, deploying, and governing AI coworkers—on your infrastructure, with your models, under your control.

The private enterprise alternative to OpenAI Frontier: the same agent capabilities, running on your infrastructure, with data that never leaves it.

- Your institutional memory never leaves your environment

- Every output is evidence-grade: cited, auditable, exportable

- Any model, any provider—switch without re-architecting

- Governance is architectural, not bolted on

Not ready to go fully private? Strip the data before it leaves.

If your workflows depend on an external AI vendor—OpenAI, Anthropic, or any other—our anonymization layer strips sensitive entities before documents leave your environment. PII, financials, and identifiers are replaced with consistent tokens. The vendor processes clean data. Your originals never move.

How it works

1. Upload your document: a contract, brief, case file, or report.

2. Our anonymization layer detects and replaces all sensitive entities with consistent tokens.

3. Send the anonymized version to your external vendor, then retrieve the output.

4. De-anonymize locally. Tokens map back to the originals. The vendor never holds the key.

Runs entirely inside your environment. No data leaves until you choose to send the anonymized output.
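To make the flow above concrete, here is a minimal Python sketch of consistent-token anonymization and local de-anonymization. It is an illustration under stated assumptions, not the product's implementation: the two regex patterns, the `[LABEL_n]` token format, and the function names `anonymize` and `deanonymize` are all hypothetical, and a production detector would need to cover far more entity types than email addresses and US-style SSNs.

```python
import re
from typing import Dict, Tuple

# Hypothetical detection patterns, for illustration only.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def anonymize(text: str) -> Tuple[str, Dict[str, str]]:
    """Replace each sensitive value with a consistent token.

    Returns the anonymized text and the token -> original key,
    which stays local and is never sent to the vendor.
    """
    token_for: Dict[str, str] = {}  # original value -> token
    counters: Dict[str, int] = {}   # per-label running count

    for label, pattern in PATTERNS.items():
        def replace(match: "re.Match[str]", label: str = label) -> str:
            value = match.group(0)
            if value not in token_for:
                counters[label] = counters.get(label, 0) + 1
                token_for[value] = f"[{label}_{counters[label]}]"
            # The same value always maps to the same token.
            return token_for[value]

        text = pattern.sub(replace, text)

    key = {token: value for value, token in token_for.items()}
    return text, key


def deanonymize(text: str, key: Dict[str, str]) -> str:
    """Map tokens in the vendor's output back to the original values."""
    for token, value in key.items():
        text = text.replace(token, value)
    return text
```

With this sketch, a document that mentions the same email address twice yields the same token in both places, so the external vendor can still reason about "who is who" without seeing the real value. The key returned by `anonymize` is the only thing that can reverse the mapping, and it never has to leave the environment.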

The question isn't whether to deploy AI agents. It's who owns the platform they run on.

Enterprise AI platforms promise to manage your agents, workflows, and institutional knowledge. But most require you to centralize your business context—your operational definitions, your customer data, your decision logic—inside a vendor-operated platform.

That creates three structural risks:

Context Lock-In

The more institutional memory you build in a vendor's context layer, the harder it is to leave. Your operational knowledge becomes their retention mechanism.

Model Dependency

Platforms tied to one model vendor couple your workflows to a single roadmap. Model leadership rotates; your operational platform shouldn't have to rotate with it.

Data Exposure

Sending business context to a shared platform means trusting a vendor's data handling for your most sensitive operational workflows. For regulated industries, that trust is a liability.

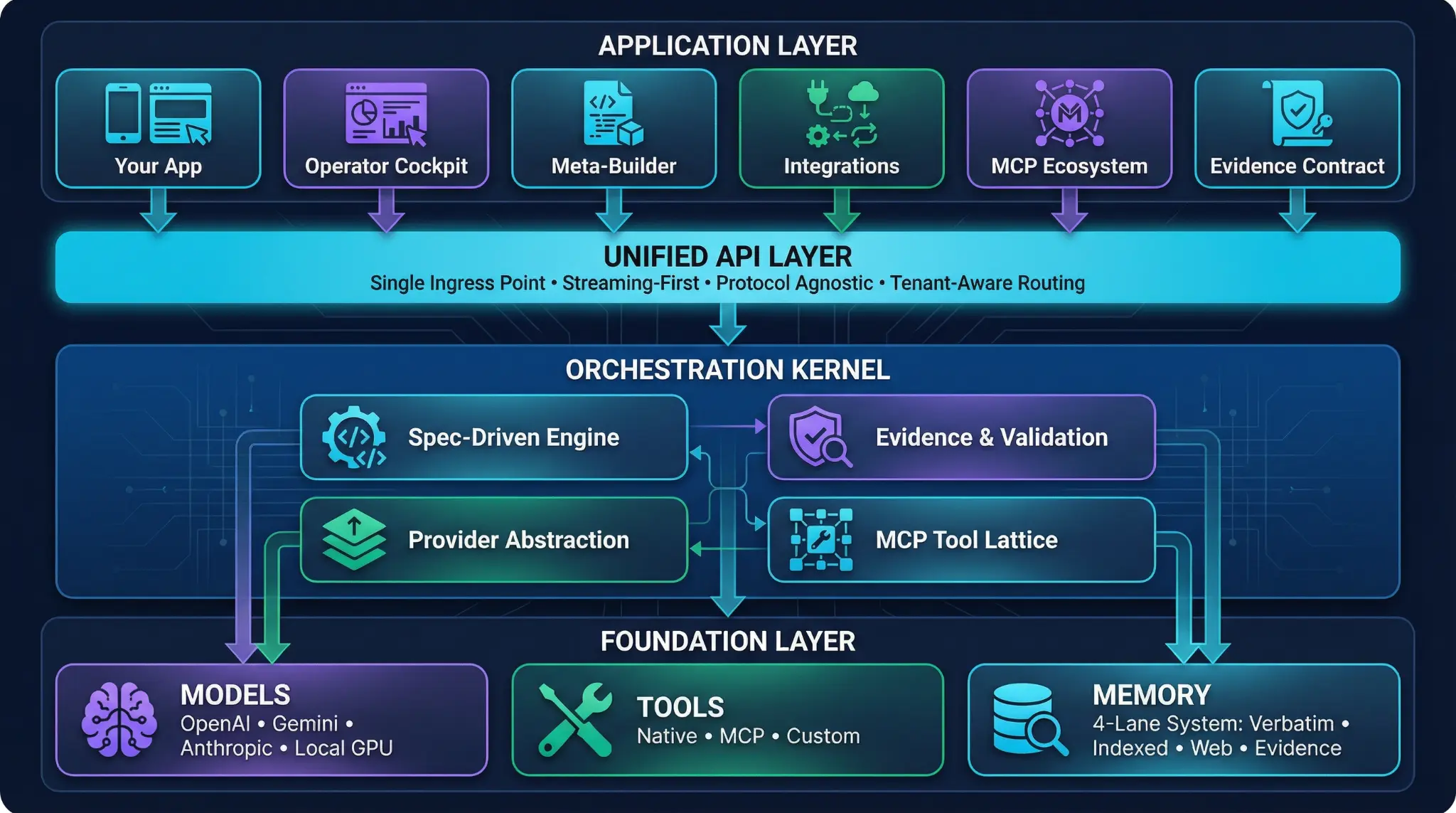

One platform. Four layers. Complete ownership.

SmoothOperator.ai gives you the full enterprise agent stack—context, execution, governance, integration—deployed privately inside your environment.

Private Context Layer

Your institutional memory, inside your boundary. A governed document and knowledge layer (DMS + collections) that scopes what agents can see and act on. Definitions like "customer," "policy," or "case" are consistent across every agent and workflow—without centralizing that knowledge in a vendor's cloud.

Key differentiator: Collection-scoped retrieval with role-based access. Agents only see what they're permissioned to see.
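As a rough illustration of collection-scoped retrieval, the Python sketch below filters the corpus by the caller's role before any matching happens, so out-of-scope documents never become candidates. The `Document` type, the `ROLE_COLLECTIONS` grants, and the keyword matcher are hypothetical stand-ins chosen for brevity; they are not the platform's data model or search engine.

```python
from dataclasses import dataclass
from typing import Dict, List, Set


@dataclass(frozen=True)
class Document:
    doc_id: str
    collection: str
    text: str


# Hypothetical grants: which collections each role may read.
ROLE_COLLECTIONS: Dict[str, Set[str]] = {
    "claims_analyst": {"claims", "policies"},
    "support_agent": {"policies"},
}


def scoped_search(query: str, role: str, corpus: List[Document]) -> List[Document]:
    """Return matching documents from the collections this role may read."""
    # Unknown roles get an empty grant, so they see nothing.
    allowed = ROLE_COLLECTIONS.get(role, set())

    # Scope first: documents outside the grant are dropped before matching.
    visible = [doc for doc in corpus if doc.collection in allowed]

    # Naive keyword match, standing in for a real retriever.
    terms = query.lower().split()
    return [doc for doc in visible if any(t in doc.text.lower() for t in terms)]
```

The design point is the order of operations: permission filtering runs before relevance matching, so a document in a collection the role cannot read is never scored, ranked, or returned.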

Agent Execution

Multi-agent workflows that run reliably in production. Chat-first execution through ChatKit for complex, multi-step workflows. Structured artifacts—threads, attachments, exports—that operational teams can review, reuse, and audit. Orchestrated teams for complex reasoning; specialized vertical agents for high-speed domain queries.

Key differentiator: Evidence-grade outputs with citation integrity checks. Every answer is cited, verifiable, and exportable as an audit-ready bundle.
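One way to picture a citation integrity check is sketched below in Python. It assumes, hypothetically, that each citation carries a source ID and a quoted span, and it verifies two things: that the source was actually in the retrieved set, and that the quote appears in that source. The `Citation` type and `check_citations` function are illustrative names, not the product's API, and a real check would likely go further than substring matching.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Citation:
    source_id: str
    quote: str


def _normalize(text: str) -> str:
    """Lowercase and collapse whitespace so formatting differences don't matter."""
    return " ".join(text.lower().split())


def check_citations(citations: List[Citation], sources: Dict[str, str]) -> List[str]:
    """Return a list of problems; an empty list means every citation checks out."""
    if not citations:
        return ["answer has no citations"]

    problems: List[str] = []
    for citation in citations:
        source_text = sources.get(citation.source_id)
        if source_text is None:
            problems.append(f"{citation.source_id}: source not in retrieved set")
        elif _normalize(citation.quote) not in _normalize(source_text):
            problems.append(f"{citation.source_id}: quote not found in source")
    return problems
```

An answer would only be exported when `check_citations` returns an empty list; anything else is a signal to block the output or flag it for review.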

Governance & Controls

Governance that's architectural, not a policy layer. RunControls define behavior boundaries per workflow. Role-based access at the collection, agent, and workflow level. Full traceability across tools, agents, and outputs. Built for regulated industries where "the AI said so" isn't sufficient.

Key differentiator: RunControls enforce boundaries at execution time, not after the fact. Compliance is a runtime property, not a reporting function.
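The idea of enforcing boundaries at execution time can be sketched as a guard that every tool call must pass through. In the Python example below, only the name `RunControls` comes from the text above; its fields (`allowed_tools`, `max_tool_calls`), the `GovernedRun` wrapper, and the `RunControlViolation` exception are assumptions made up for illustration and do not describe the product's actual schema.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, FrozenSet, List, Tuple


class RunControlViolation(Exception):
    """Raised when a tool call falls outside the workflow's boundaries."""


@dataclass(frozen=True)
class RunControls:
    # Hypothetical fields; the real RunControls schema is not described here.
    allowed_tools: FrozenSet[str]
    max_tool_calls: int


@dataclass
class GovernedRun:
    controls: RunControls
    tools: Dict[str, Callable[..., object]]
    calls_made: int = 0
    trace: List[Tuple[str, str]] = field(default_factory=list)

    def call_tool(self, name: str, **kwargs: object) -> object:
        """Check the controls first, then run the tool; record both outcomes."""
        if name not in self.controls.allowed_tools:
            self.trace.append(("blocked", name))
            raise RunControlViolation(f"tool '{name}' is not on the allowlist")
        if self.calls_made >= self.controls.max_tool_calls:
            self.trace.append(("blocked", name))
            raise RunControlViolation("tool-call budget exhausted")

        self.calls_made += 1
        result = self.tools[name](**kwargs)
        self.trace.append(("allowed", name))
        return result
```

Because the check sits in front of the call rather than in a later report, a disallowed action never runs, and the `trace` list records blocked attempts alongside allowed ones.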

Open Integration

Your models. Your tools. Your stack. Model-agnostic, BYOK. Native MCP tool server support behind consistent authentication and allowlists. Agents act across your existing systems without routing through a vendor's orchestration layer. Switching models is a configuration change, not a migration.

Key differentiator: True model independence. No platform-model coupling. The context layer doesn't care which model is running.
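"A configuration change, not a migration" can be pictured as a small provider registry: the agent logic depends on one narrow interface, and the config picks which model sits behind it. The Python sketch below uses stub functions in place of real model clients; the provider names, the `PROVIDERS` registry, and `build_agent` are hypothetical and exist only to show the shape of the idea.

```python
from typing import Callable, Dict

# The only interface the agent depends on: prompt in, text out.
ModelFn = Callable[[str], str]


def _local_stub(prompt: str) -> str:
    """Stand-in for a self-hosted model client."""
    return f"[local] {prompt}"


def _hosted_stub(prompt: str) -> str:
    """Stand-in for a hosted provider called with your own key (BYOK)."""
    return f"[hosted] {prompt}"


# Hypothetical registry mapping config names to model clients.
PROVIDERS: Dict[str, ModelFn] = {
    "local-llm": _local_stub,
    "hosted-llm": _hosted_stub,
}


def build_agent(config: Dict[str, str]) -> Callable[[str, str], str]:
    """Build an agent whose model is chosen entirely by configuration."""
    model = PROVIDERS[config["model"]]

    def answer(question: str, context: str) -> str:
        # Context assembly is identical no matter which model runs.
        prompt = f"Context: {context}\nQuestion: {question}"
        return model(prompt)

    return answer
```

Swapping `{"model": "local-llm"}` for `{"model": "hosted-llm"}` changes which client answers, while the prompt construction and the context passed in stay exactly the same.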

Deploy AI coworkers across your operations: governed, grounded, and private.

AI Teammates

Role-aligned agents for analysts, operators, and support teams. Grounded in permissioned collections. Integrated into the tools your people already use. Answers come with sources, not guesses.

Workflow Automation

End-to-end process automation across support, ops, and document-heavy functions. Run as governed "workflows-as-products" with structured inputs, outputs, and audit trails.

Evidence-Backed Deliverables

Audit-ready outputs for regulated and high-stakes environments. Citation integrity checks, structured export bundles, and full traceability from question to answer to source.
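A structured export bundle could, for example, pair each answer with a hash manifest of the sources it relied on, so an auditor can later confirm the sources have not changed. The Python sketch below is one possible shape, assuming SHA-256 digests and a plain dictionary layout; the field names and the `build_bundle` and `verify_bundle` functions are hypothetical, not the product's export format.

```python
import hashlib
from typing import Dict, List


def _digest(text: str) -> str:
    """SHA-256 of the source text, as a hex string."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def build_bundle(question: str, answer: str, sources: Dict[str, str]) -> Dict[str, object]:
    """Package a question, its answer, and a hash manifest of the cited sources."""
    manifest: List[Dict[str, str]] = [
        {"source_id": source_id, "sha256": _digest(text)}
        for source_id, text in sorted(sources.items())  # stable ordering
    ]
    return {"question": question, "answer": answer, "sources": manifest}


def verify_bundle(bundle: Dict[str, object], sources: Dict[str, str]) -> bool:
    """Check that every source in the manifest still exists and is unchanged."""
    for entry in bundle["sources"]:  # type: ignore[union-attr]
        source_id = entry["source_id"]
        if source_id not in sources:
            return False
        if _digest(sources[source_id]) != entry["sha256"]:
            return False
    return True
```

The bundle is plain data, so it can be serialized to JSON for export, and `verify_bundle` gives a simple yes-or-no answer to whether the evidence behind an answer is still intact.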

Measured outcomes, not projected savings.

reduction in knowledge search time

decrease in escalations to subject-matter experts

data residency within customer boundary

vendor lock-in on models, context, or governance

Based on outcomes from pilot deployments across regulated industries. Individual results vary by deployment scope and use case.

Built for the environments where "trust us" isn't sufficient.

SOC 2 Type II certified. GDPR compliant. Air-gap deployable. Full audit trail from input to output to source. Designed for regulated industries, security-conscious organizations, and any enterprise that treats data sovereignty as non-negotiable.

- SOC 2 Type II: Certified security controls

- GDPR Compliant: EU data protection ready

- Air-Gap Deploy: Fully on-premises option

- Full Audit Trail: Complete observability

Your agents. Your data. Your control plane.

See how SmoothOperator.ai deploys enterprise AI without ceding control of your institutional memory.